The Problem That Architectures Were Trying to Solve

The central challenge facing processor architects in the 1960s and 1970s was the semantic gap: the distance between the high-level operations expressed in programming languages (loop constructs, procedure calls, indexed array accesses) and the primitive operations available in hardware. The prevailing assumption was that closing this gap through richer hardware instructions would improve performance, since each high-level operation could be expressed as a single instruction or a short instruction sequence rather than a long series of primitive operations.

This assumption justified the accumulation of increasingly complex instruction sets across successive processor generations. The Digital Equipment Corporation VAX-11 architecture, introduced in 1977, exemplified the mature CISC philosophy: its instruction set featured over 300 unique opcodes, variable-length instruction encodings ranging from 1 to 57 bytes, memory-to-memory operations, and addressing modes capable of performing full arithmetic as part of address calculation. A single VAX instruction could, in principle, execute a complete loop iteration including memory access, arithmetic, comparison, and conditional branch.

The Empirical Challenge to CISC

The systematic academic critique of the CISC philosophy emerged from compiler studies conducted at IBM Research, the University of California, Berkeley, and Stanford University in the late 1970s and early 1980s. The central finding was counterintuitive: compilers generating code for complex architectures overwhelmingly used a small subset of the available instruction set. Studies of compiled VAX code revealed that roughly 10 instructions accounted for approximately 80% of all executed instructions, with the majority of complex addressing modes and multi-operation instructions rarely or never appearing in compiler-generated code.
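The kind of measurement behind this finding can be sketched in a few lines of Python. The function below is a minimal illustration, not the methodology of the original studies: it counts opcodes in an execution trace and reports what fraction of executed instructions the `n` most frequent opcodes cover. The names `top_n_coverage` and `sample_trace` are hypothetical, and the trace counts are invented purely for illustration (the mnemonics are VAX ones, but the frequencies are not measured data).

```python
from collections import Counter


def top_n_coverage(trace, n):
    """Fraction of executed instructions covered by the n most frequent opcodes."""
    counts = Counter(trace)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    covered = sum(count for _, count in counts.most_common(n))
    return covered / total


# Invented trace of 100 executed instructions (illustrative counts only).
sample_trace = (
    ["MOVL"] * 40
    + ["BNEQ"] * 20
    + ["ADDL2"] * 15
    + ["CMPL"] * 10
    + ["CALLS"] * 5
    # Complex instructions that a compiler rarely emits.
    + ["POLYF", "EDITPC", "CRC", "INDEX", "MATCHC"] * 2
)

if __name__ == "__main__":
    for n in (1, 4, 5, 10):
        print(n, top_n_coverage(sample_trace, n))
```

In this made-up trace, four of the ten distinct opcodes already cover 85% of executed instructions, which is the shape of distribution the studies reported for real workloads.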

The implication was significant: the transistor area and design complexity devoted to implementing hundreds of rarely used instructions were reducing the chip budget available for the execution units, register files, and cache memories that governed actual computational throughput.

"The goal of RISC is not to have few instructions; the goal is to allow the compiler to exploit the hardware easily and predictably." — David Patterson, University of California Berkeley, 1980

The RISC Research Projects

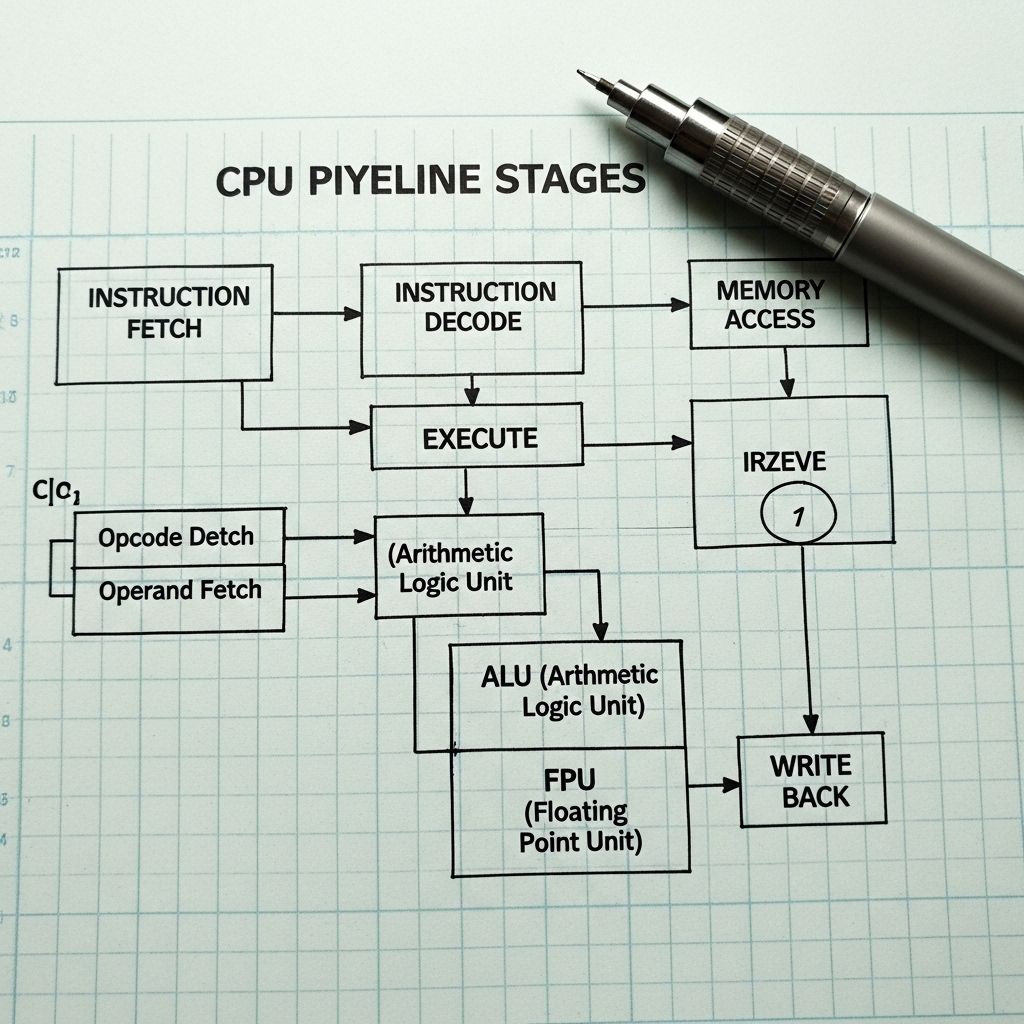

The RISC research programme at Berkeley, led by David Patterson and Carlo Séquin, produced the RISC I processor in 1982 — a design with 44,500 transistors, 31 instructions, and a novel register window mechanism to accelerate procedure calls without memory access. Concurrently, John Hennessy at Stanford led the MIPS project (Microprocessor without Interlocked Pipeline Stages), which demonstrated that a carefully designed simple pipeline, free of hardware interlocking logic, could sustain single-cycle execution across most instructions when supported by an intelligent compiler.
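The register window idea can be illustrated with a small simulation. This is a simplified sketch under stated assumptions, not a model of the actual RISC I register file: the window count and the 8-in/8-local/8-out split are illustrative sizes, the class name `RegisterWindows` is hypothetical, and window overflow simply raises an error where real hardware would trap and spill a window to memory. The point it shows is that a caller's "out" registers and the callee's "in" registers are the same physical registers, so arguments and return values pass across a call without any load or store.

```python
class RegisterWindows:
    """Toy model of overlapping register windows (illustrative sizes)."""

    def __init__(self, n_windows=4, n_shared=8, n_local=8):
        self.n_windows = n_windows
        self.n_shared = n_shared          # size of each in/out group
        self.n_local = n_local
        self.stride = n_shared + n_local  # physical registers per window step
        # One extra shared group so the last window also has "out" registers.
        self.phys = [0] * (n_windows * self.stride + n_shared)
        self.cwp = 0                      # current window pointer

    def _index(self, kind, i):
        base = self.cwp * self.stride
        if kind == "in" and 0 <= i < self.n_shared:
            return base + i
        if kind == "local" and 0 <= i < self.n_local:
            return base + self.n_shared + i
        if kind == "out" and 0 <= i < self.n_shared:
            # The outs of window w are the ins of window w + 1.
            return base + self.stride + i
        raise ValueError(f"bad register {kind}{i}")

    def read(self, kind, i):
        return self.phys[self._index(kind, i)]

    def write(self, kind, i, value):
        self.phys[self._index(kind, i)] = value

    def call(self):
        if self.cwp + 1 >= self.n_windows:
            raise OverflowError("window overflow: hardware would trap and spill")
        self.cwp += 1

    def ret(self):
        if self.cwp == 0:
            raise OverflowError("window underflow: hardware would trap and refill")
        self.cwp -= 1


if __name__ == "__main__":
    rw = RegisterWindows()
    rw.write("local", 0, 7)     # caller's private value
    rw.write("out", 0, 42)      # caller places an argument
    rw.call()                   # no memory traffic: only the pointer moves
    arg = rw.read("in", 0)      # callee sees the argument as in0
    rw.write("in", 0, arg + 1)  # callee leaves its result in the shared register
    rw.ret()
    print(rw.read("out", 0), rw.read("local", 0))
```

After the call returns, the caller finds the result in its own `out0` and its local register is untouched, because the callee's locals live in a different part of the physical file.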

Both projects embodied the core RISC principles: a fixed instruction width (32 bits in both cases), a load-store memory access model (only dedicated load and store instructions access memory; all other operations use register operands), a large general-purpose register file (32 registers in MIPS), and an instruction set simple enough to be decoded by combinational logic within a single clock cycle.
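To make the decoding claim concrete, the sketch below extracts the fields of a 32-bit MIPS instruction word using only shifts and masks, which is the software analogue of fixed wiring in a hardware decoder. The field positions follow the standard MIPS R-, I-, and J-type layouts; the function name `decode_mips` is hypothetical, and the decoder is deliberately simplified (it treats every opcode other than 0, 2, and 3 as I-type and always sign-extends the immediate, as loads, stores, and `addi` do).

```python
def decode_mips(word):
    """Split a 32-bit MIPS instruction word into its fixed-position fields."""
    word &= 0xFFFFFFFF
    opcode = (word >> 26) & 0x3F          # bits 31..26 in every format

    if opcode in (0x02, 0x03):            # J-type: j, jal
        return {"format": "J", "opcode": opcode, "target": word & 0x03FFFFFF}

    rs = (word >> 21) & 0x1F              # bits 25..21
    rt = (word >> 16) & 0x1F              # bits 20..16

    if opcode == 0x00:                    # R-type: register-to-register
        return {
            "format": "R",
            "opcode": opcode,
            "rs": rs,
            "rt": rt,
            "rd": (word >> 11) & 0x1F,    # bits 15..11
            "shamt": (word >> 6) & 0x1F,  # bits 10..6
            "funct": word & 0x3F,         # bits 5..0
        }

    imm = word & 0xFFFF                   # I-type: 16-bit immediate
    if imm & 0x8000:                      # sign-extend (simplification)
        imm -= 0x10000
    return {"format": "I", "opcode": opcode, "rs": rs, "rt": rt, "imm": imm}


if __name__ == "__main__":
    # add $t0, $t1, $t2  (registers 8, 9, 10; funct 0x20)
    print(decode_mips(0x012A4020))
    # lw $t0, 4($sp)     (opcode 0x23; base register 29; offset 4)
    print(decode_mips(0x8FA80004))
```

Because every field sits at the same bit position regardless of the instruction, the register numbers can be sent to the register file before the opcode has been fully interpreted. A variable-length encoding cannot do this, since the position of each operand depends on the bytes that precede it.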

| Property | CISC (VAX-11/780) | RISC (MIPS R2000) |
|---|---|---|
| Instruction count | 300+ | ~55 |
| Instruction length | Variable, 1–57 bytes | Fixed, 32 bits |
| Memory access | Any instruction | Load/Store only |
| General registers | 16 (some implicit) | 32 (explicit) |
| Pipeline stages | Variable (microcode) | 5 (fixed) |
| Addressing modes | 22 | 1 (base + offset) |

Commercial RISC Implementations

The transition from academic research to commercial silicon proceeded rapidly through the 1980s. Sun Microsystems' SPARC architecture (1987), based directly on the Berkeley RISC research, powered Sun's workstation line. The MIPS architecture (R2000/R3000, 1985–1988) established itself in workstations, embedded controllers, and graphics subsystems. Hewlett-Packard's Precision Architecture (PA-RISC, 1986) and IBM's POWER architecture (1990) brought RISC design into the high-performance server market, displacing minicomputer CISC architectures in technical computing applications.

The x86 architecture — the dominant CISC design — demonstrated remarkable competitive resilience through the application of RISC execution techniques within a CISC decoding wrapper. Beginning with the Intel P6 microarchitecture (Pentium Pro, 1995), x86 processors translated variable-length macro-instructions into fixed-width micro-operations (µops) before issuing them to an out-of-order superscalar execution engine structurally similar to a RISC core. The CISC instruction set was thus preserved as a binary compatibility layer above a RISC execution substrate — a pragmatic engineering resolution to a theoretical debate.
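The translation step can be sketched as a toy "cracking" function that splits a two-operand macro-instruction into load, ALU, and store micro-operations whenever an operand is in memory. This is an illustrative model only: the `MicroOp` type, the `crack` function, the `tmp0` temporary, and the bracketed memory-operand notation are all assumptions made for the example, and the real P6 µop format and decoder rules are considerably more involved (stores, for instance, are handled in more than one step).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MicroOp:
    kind: str     # "load", "alu", or "store"
    op: str       # ALU operation name, or "" for loads and stores
    dst: str      # destination register or memory operand
    srcs: tuple   # source registers or memory operands


def is_mem(operand):
    """Memory operands are written in brackets, e.g. "[ebx]"."""
    return operand.startswith("[") and operand.endswith("]")


def crack(op, dst, src):
    """Split the macro-instruction `op dst, src` into simple micro-ops."""
    if is_mem(dst) and is_mem(src):
        raise ValueError("at most one memory operand is supported")

    uops = []
    a, b = dst, src
    if is_mem(src):
        # Load the memory source into a temporary register first.
        uops.append(MicroOp("load", "", "tmp0", (src,)))
        b = "tmp0"
    if is_mem(dst):
        # Read-modify-write: load the destination, operate, store back.
        uops.append(MicroOp("load", "", "tmp0", (dst,)))
        a = "tmp0"

    # The ALU micro-op itself only ever touches registers.
    uops.append(MicroOp("alu", op, a, (a, b)))

    if is_mem(dst):
        uops.append(MicroOp("store", "", dst, (a,)))
    return uops


if __name__ == "__main__":
    for uop in crack("add", "[ebx]", "eax"):
        print(uop)
```

A register-to-register `add` passes through as a single ALU micro-op, while the memory-destination form becomes a load, an ALU operation, and a store. The resulting sequence has the load-store shape of RISC code, which is what lets the back end schedule it like a RISC core would.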

The ARM Paradigm and the Present Landscape

The ARM architecture, developed at Acorn Computers in Cambridge during the mid-1980s under the design of Sophie Wilson and Steve Furber, drew directly on the Berkeley RISC principles. Its combination of a simple 32-bit instruction set, low transistor count, and exceptional power efficiency made it the dominant architecture for battery-constrained mobile devices from the mid-1990s onward.

The 64-bit ARMv8 instruction set, introduced in 2011 and widely deployed from 2014, brought ARM-architecture processors into the server and laptop markets. By 2024, processors based on the ARM instruction set architecture accounted for the majority of all processors manufactured globally by unit volume, executing in devices ranging from embedded microcontrollers with thousands of transistors to high-performance server chips with over 100 billion transistors. The RISC vs. CISC architectural distinction, once a heated academic and commercial debate, has been subsumed into a more nuanced landscape of heterogeneous multi-architecture SoC design.